The Quantum Supercomputer is Moving In.

Why the perpetual "ten years away" framing is dead, and what the integration of classical and quantum hardware means for the future of infrastructure.

In 1994, the Internet was in its infancy. Secure communication, the bedrock of modern digital commerce, relied on public-key cryptosystems like RSA. These systems were, and still are, predicated on a simple mathematical asymmetry: multiplying two large prime numbers together is computationally trivial, but factoring the resulting integer back into its constituent primes is computationally infeasible for classical computers at the key sizes used in practice.

Then came mathematician Peter Shor.

Shor developed a quantum algorithm showing that a sufficiently large, stable quantum computer could factor these massive integers in polynomial time, a super-polynomial speedup over the best known classical methods. To understand the panic and excitement this caused, one must look at the computational complexity.

For a classical computer using the most efficient known algorithm (the General Number Field Sieve), the time complexity to factor an integer N scales sub-exponentially:

$$\exp\left(\left(\sqrt[3]{64/9} + o(1)\right)(\ln N)^{1/3}(\ln \ln N)^{2/3}\right)$$

Shor demonstrated that a quantum computer could perform the same task in polynomial time:

$$O\left((\log N)^{3}\right)$$

This stark mathematical reality meant that a functioning quantum computer could break the encryption securing the global financial system, classified military communications, and private data infrastructure. It was the ultimate motivator. Funding poured into quantum physics departments worldwide.
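To make the gap between the two scaling laws concrete, here is a rough numerical sketch. It assumes the heuristic General Number Field Sieve formula with the o(1) term dropped and a textbook (log N)^3 gate count for Shor's algorithm with all constants ignored, so the outputs are orders of magnitude only, not predictions of real run times. The function names are illustrative.

```python
import math


def gnfs_log2_ops(bits: int) -> float:
    """Heuristic GNFS cost as log2(operations); the o(1) term is dropped (assumption)."""
    ln_n = bits * math.log(2)  # ln N for an integer N of the given bit length
    c = (64 / 9) ** (1 / 3)
    exponent = c * ln_n ** (1 / 3) * math.log(ln_n) ** (2 / 3)
    return exponent / math.log(2)  # convert from natural log to log base 2


def shor_log2_ops(bits: int) -> float:
    """Textbook (log N)^3 gate count as log2(gates); constants are ignored (assumption)."""
    return 3 * math.log2(bits)


for bits in (512, 1024, 2048, 4096):
    print(
        f"{bits:>5}-bit N: GNFS ~2^{gnfs_log2_ops(bits):.0f} operations, "
        f"Shor ~2^{shor_log2_ops(bits):.0f} gates"
    )
```

The classical exponent keeps climbing as the key grows, while the quantum gate count barely moves. That asymmetry, rather than any single number, is what made the result so alarming.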

Thirty-two years later, in 2026, no one has built a machine capable of executing Shor’s algorithm at a scale that threatens global encryption. The problem was never the math; the problem was the physical universe.

The Decade That Never Ended

Building a quantum computer required thousands, perhaps millions, of qubits operating with error rates that no one in the 1990s or 2000s knew how to achieve. Because of this monumental physics hurdle, the “decade away” framing became a self-reinforcing prophecy.

A comprehensive report by the National Academies of Sciences, Engineering, and Medicine perfectly encapsulated this stagnation. They noted that quantum computing appeared on Gartner’s prestigious list of emerging technologies an astonishing 11 times between 2000 and 2017. In every single appearance, it was placed at the earliest stage of the “hype cycle” and categorized as being more than ten years away from commercial viability.

When the National Academies published their own definitive assessment in 2018, the conclusions offered cold comfort to optimists. The report stated:

“Significant technical and financial issues remain towards building a large, fault-tolerant quantum computer, and one is unlikely to be built within the coming decade.”

Boston Consulting Group (BCG) published a parallel analysis that same year. Their conclusion was remarkably similar, noting that the full impact of quantum computing was “probably more than a decade away,” even as they acknowledged that a closer disruption was gathering force in the near term.

This pattern of prediction was not dishonest, nor was it a failure of imagination. The problems were brutally real, rooted in the fundamental laws of quantum mechanics.

The Physics of Fragility

To understand why the timeline stalled, one must understand the qubit. Unlike a classical bit, which is definitely a 0 or a 1, a qubit exists in a superposition of states, mathematically represented as:

$$|\psi\rangle = \alpha|0\rangle + \beta|1\rangle$$

Here, the complex coefficients α and β represent probability amplitudes, constrained by the normalization condition:

$$|\alpha|^2 + |\beta|^2 = 1$$

This delicate state of superposition, combined with quantum entanglement (where the state of one qubit is intrinsically linked to another), is what lets quantum algorithms outperform classical ones on certain problems.
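The relationship between amplitudes and measurement outcomes can be sketched in a few lines of plain Python. This is a toy classical simulation of a single qubit, not how a real device is programmed, and the helper names are made up for illustration.

```python
import math
import random


def normalize(alpha: complex, beta: complex):
    """Rescale the amplitudes so that |alpha|^2 + |beta|^2 = 1."""
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    return alpha / norm, beta / norm


def measurement_probs(alpha: complex, beta: complex):
    """Probability of reading 0 and of reading 1 when the qubit is measured."""
    return abs(alpha) ** 2, abs(beta) ** 2


def sample(alpha: complex, beta: complex, shots: int, seed: int = 0):
    """Simulate repeated measurements; each shot yields a definite 0 or 1."""
    rng = random.Random(seed)
    p0, _ = measurement_probs(alpha, beta)
    ones = sum(1 for _ in range(shots) if rng.random() >= p0)
    return {"0": shots - ones, "1": ones}


# Equal superposition with a relative phase between the two amplitudes.
alpha, beta = normalize(1, 1j)
p0, p1 = measurement_probs(alpha, beta)
print(f"P(0) = {p0:.3f}, P(1) = {p1:.3f}")
print(sample(alpha, beta, shots=1000))
```

The complex phase of β does not affect these single-qubit probabilities. It only matters once amplitudes interfere, which is exactly what quantum algorithms exploit.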

However, this mathematical elegance comes at a severe physical cost. Qubits are incredibly fragile. Any interaction with the outside environment—a stray photon, a microscopic fluctuation in temperature, a passing cosmic ray, or even the electromagnetic noise from the control electronics themselves—causes the quantum state to collapse. This phenomenon, known as decoherence, introduces errors into the calculation.

Because noise accumulates as calculations progress, running deep algorithms like Shor’s requires Quantum Error Correction (QEC). Unlike classical error correction, which copies data (a process forbidden in quantum mechanics by the No-Cloning Theorem), QEC requires encoding a single “logical qubit” across dozens, hundreds, or even thousands of physical qubits.
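The payoff of spreading one logical qubit across several physical ones can be illustrated with the simplest possible code: three qubits and a majority vote. The sketch below is a classical toy model that tracks only independent bit-flip errors with probability p per qubit; it ignores phase errors and measurement noise, which real QEC must also handle. The function names are illustrative.

```python
import random


def logical_error_rate_exact(p: float) -> float:
    """Majority vote over 3 qubits fails when 2 or all 3 of them flip."""
    return 3 * p**2 * (1 - p) + p**3


def logical_error_rate_sampled(p: float, trials: int = 100_000, seed: int = 1) -> float:
    """Monte Carlo estimate of the same failure probability."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        flips = sum(rng.random() < p for _ in range(3))
        if flips >= 2:  # the majority is now wrong, so decoding gives the wrong bit
            failures += 1
    return failures / trials


for p in (0.001, 0.01, 0.1, 0.6):
    print(
        f"physical p = {p}: logical (exact) = {logical_error_rate_exact(p):.6f}, "
        f"logical (sampled) = {logical_error_rate_sampled(p):.6f}"
    )
```

For small p the encoded error rate is roughly 3p², far below p. Once p exceeds one half, the encoding makes things worse, a miniature version of the threshold behavior that governs larger codes.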

For decades, the overhead required for error correction was insurmountable. Each time researchers hit a new physical milestone, the remaining distance to a useful, error-corrected machine stretched out before them like a mirage.

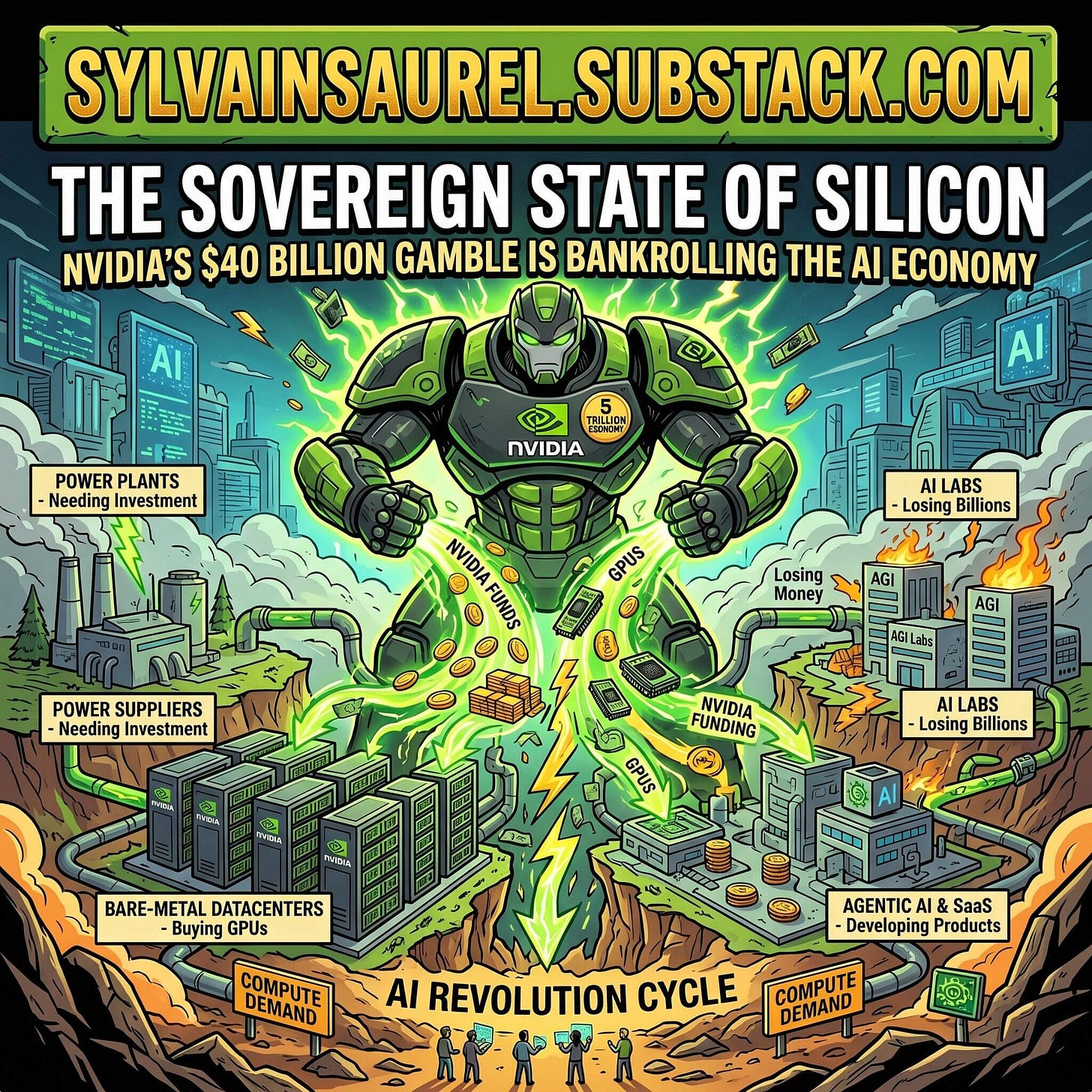

The Era of Hero Experiments: Three Milestones That Shifted the Conversation

The transition from a perpetual “decade away” to a concrete engineering schedule did not happen overnight. It was forced by three distinct, highly publicized milestones that gradually moved the goalposts from theoretical physics to applied engineering.

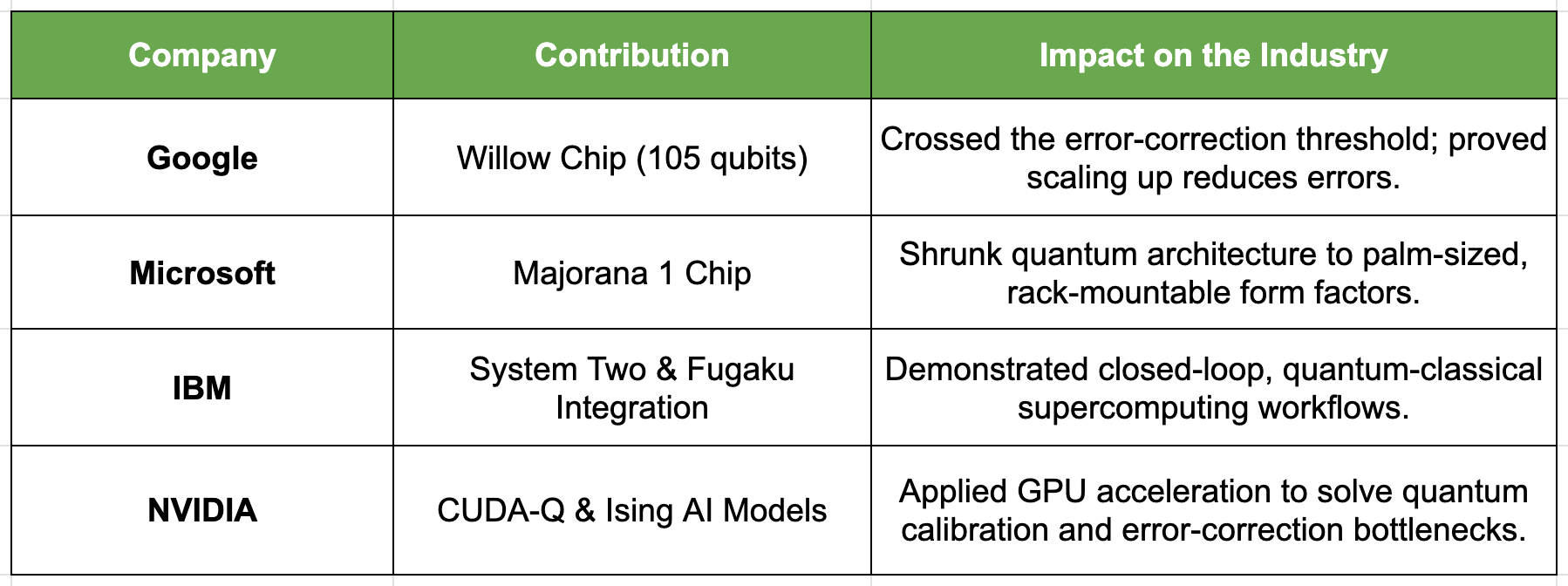

1. Google Sycamore and the Supremacy Debate (October 2019)

In October 2019, Alphabet’s Google published a landmark paper in the journal Nature. They announced that their 53-qubit processor, named Sycamore, had achieved “Quantum Supremacy”—the point at which a quantum computer can perform a task impossible for a classical computer in any reasonable timeframe.

Google claimed that Sycamore completed a highly specific, complex mathematical task (random circuit sampling) in approximately 200 seconds. They estimated that the world’s most powerful classical supercomputer at the time would require 10,000 years to simulate the same calculation.

The announcement sent shockwaves through the tech community, but it also sparked immediate commercial and scientific friction. IBM, a primary rival in the quantum space, fired back aggressively. In a blog post and accompanying preprint, IBM argued that Google’s claim was drastically overstated: with mathematical optimizations and massive disk storage, it estimated that a classical supercomputer like Oak Ridge’s Summit could perform the calculation in just 2.5 days, not 10,000 years.

While the academic debate over the definition of “supremacy” dominated headlines, the true significance of the Sycamore processor was subtly overlooked by the mainstream: Google had demonstrated an unprecedented level of precise, programmable control over 53 qubits operating in tandem. It was a staggering feat of cryogenic engineering and microwave control.

2. Google Willow and the Error Correction Rubicon (December 2024)

If Sycamore was a proof of concept, Google’s announcement five years later, in December 2024, was a foundational earthquake.

Google unveiled Willow, a 105-qubit chip. While it performed a benchmark computation in under five minutes that would theoretically take a classical supercomputer 10 septillion years, the computation itself was a secondary headline. The true breakthrough lay in error correction.

For thirty years, quantum physicists had chased a mathematical concept known as the “fault-tolerance threshold.” In simple terms, the threshold theorem states that if the physical error rate p of your qubits falls below a certain critical threshold p_th, you can arbitrarily suppress logical errors by simply scaling up the system.

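Mathematically, for an error-correcting code of distance d (a measure of its size), the logical error rate p_L scales roughly as:

$$p_L \propto \left(\frac{p}{p_{\mathrm{th}}}\right)^{(d+1)/2}$$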

Before Willow, quantum computing was trapped above this threshold. Adding more physical qubits to a system introduced more noise, making the system less reliable. It was a devastating paradox: to build a powerful computer, you needed more qubits, but adding more qubits ruined the computer.

Willow cracked this 30-year challenge. Google demonstrated that as they scaled up the surface code (the specific error-correction architecture they utilize), the logical error rate fell exponentially. For the first time, adding more qubits to an error-corrected system made it better, not worse. By crossing “below the threshold,” Willow transformed quantum error correction from a theoretical physics paper into a viable hardware roadmap.
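The "better, not worse" behavior follows directly from the threshold scaling. The sketch below assumes the commonly used surface-code approximation in which the logical error rate is proportional to (p / p_th)^((d+1)/2) for code distance d. The threshold of 1% and the prefactor of 0.1 are illustrative assumptions, not Willow's measured values.

```python
def logical_error(p: float, p_th: float, d: int, prefactor: float = 0.1) -> float:
    """Approximate logical error rate for code distance d (assumed scaling law)."""
    return prefactor * (p / p_th) ** ((d + 1) / 2)


P_TH = 0.01  # assumed threshold, for illustration only

for label, p in (("below threshold", 0.005), ("above threshold", 0.012)):
    rates = [logical_error(p, P_TH, d) for d in (3, 5, 7)]
    formatted = ", ".join(f"d={d}: {r:.4f}" for d, r in zip((3, 5, 7), rates))
    print(f"{label} (p = {p}): {formatted}")
```

With the physical error rate at half the threshold, every step up in code distance halves the logical error rate. Slightly above the threshold, the same step makes it grow, which is the paradox that held the field back.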

3. Microsoft Majorana 1 and the Form Factor Revolution (February 2025)

Microsoft had long been the outlier in the quantum industry. While Google and IBM built superconducting qubits that required massive, chandelier-like dilution refrigerators, Microsoft placed a massive, decades-long bet on a theoretical concept called the “topological qubit.”

Topological quantum computing relies on elusive quasi-particles called non-Abelian anyons, specifically Majorana zero modes. In a topological architecture, quantum information is encoded not in the state of a single particle, but in the global, braided paths these particles take around each other. Because the information is stored globally, local environmental noise (the bane of superconducting qubits) cannot easily disrupt it.

The journey was rocky. A 2018 Nature paper from a Microsoft-backed team, which claimed evidence of Majorana zero modes, was retracted in 2021 after problems with its data analysis came to light. Many in the quantum community questioned whether the topological approach was a dead end.

Majorana 1 was their vindication. Microsoft placed eight topological qubits on a new architecture called the Topological Core, designed specifically to scale up to one million qubits. However, the exact physics of the chip mattered less than its form factor.

The Majorana 1 chip, complete with its qubits and essential control electronics, fits entirely in the palm of a hand. It was designed from the ground up to be deployed inside standard server racks within Microsoft’s existing Azure data centers. Microsoft promised industrial-scale problem-solving in years, not decades. While experts debated the experimental validity of the claims, given the company’s past retraction, the engineering intent was unmistakable: quantum computing was shrinking down to fit into the enterprise cloud.

The Democratization of Quantum: From the Lab to the Cloud

Hero experiments in isolated laboratories do not spawn trillion-dollar industries. Ecosystems do. The dissolution of the “decade away” myth was driven just as much by software developers as it was by quantum physicists.

This shift can be traced back to May 4, 2016. On that day, IBM made a decision that fundamentally altered the trajectory of the industry: they connected a humble, five-qubit quantum processor to the internet and made it available via the cloud to anyone who wanted to use it.

Before this moment, utilizing a quantum computer required a PhD in physics, security clearance at a national laboratory, or tenure at an elite university. IBM’s cloud access democratized the hardware overnight.

Building the Developer Army

By making the hardware accessible, IBM birthed an entire developer community. They released Qiskit, an open-source software development kit, allowing programmers to write quantum circuits using standard Python code.

A decade after that initial 2016 launch, the metrics of this ecosystem are staggering. As of 2026, the IBM quantum cloud hosts over 240,000 active users. It supports a robust network of 300 ecosystem partners, ranging from massive automotive manufacturers simulating battery chemistry to financial institutions optimizing risk portfolios.

This vast army of developers isn’t just writing theoretical code; they are characterizing noise, testing algorithms on real hardware, and finding ways to mitigate errors through software. They transformed quantum computing from an academic curiosity into a software engineering discipline.

The Concrete Roadmap

Because IBM built a cloud business around quantum, they were forced to provide their customers with something physicists historically loathed: a predictable product roadmap.

IBM’s roadmap now extends definitively through the early 2030s, prioritizing gate fidelity and logical operations over mere qubit counts.

2029: The Starling System. IBM plans to deliver its first truly fault-tolerant quantum computer. Starling is projected to feature 200 logical qubits capable of executing 100 million quantum gates in a single circuit.

2033: The Blue Jay System. A massive leap forward, Blue Jay envisions a system of 2,000 logical qubits capable of running one billion gates.

These numbers represent the inflection point where quantum computers move from simulating small molecules to modeling complex pharmaceuticals and materials science problems that are utterly intractable today.
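A back-of-the-envelope calculation shows why gate counts of this size imply error correction. The sketch assumes every gate fails independently with the same probability, which is a simplification, and asks how small that probability must be for a whole circuit to finish correctly. The 50% success target and the function names are illustrative choices.

```python
import math


def max_error_per_gate(gates: int, target_success: float = 0.5) -> float:
    """Largest per-gate error rate such that (1 - eps)^gates >= target_success."""
    return -math.expm1(math.log(target_success) / gates)


def circuit_success(gates: int, error_per_gate: float) -> float:
    """Probability that no gate fails, assuming independent errors."""
    return math.exp(gates * math.log1p(-error_per_gate))


for gates in (100_000_000, 1_000_000_000):
    eps = max_error_per_gate(gates)
    print(f"{gates:,} gates need a per-gate error rate below about {eps:.2e}")

# A physical error rate of 1 in 1,000 leaves essentially no chance of success.
print(circuit_success(100_000_000, 1e-3))
```

Under these assumptions a 100-million-gate circuit needs an effective error rate of a few parts per billion per gate. No physical qubit comes close to that, so the gate counts on the roadmap are really claims about logical error rates.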

The Hybrid Data Center: Where Quantum Meets Classical

The most profound shift in the quantum narrative is realizing that quantum computers will not replace classical computers. You will not have a quantum smartphone, nor will you use a quantum computer to stream video or write emails.

Quantum computers are highly specialized accelerators, akin to ultra-powerful GPUs, designed to solve specific optimization, simulation, and cryptography problems. Therefore, their success relies entirely on how well they integrate with existing classical infrastructure. The milestones of 2025 and 2026 reflect this reality.

IBM and RIKEN: Quantum-Centric Supercomputing

At IBM’s Quantum Developer Conference in November 2025, the company showcased progress toward delivering true “quantum advantage” by the end of 2026. But the most significant announcement was an architectural integration across the globe in Japan.

IBM and the Japanese research institute RIKEN unveiled the first IBM Quantum System Two deployed outside the United States. Crucially, they did not place it in an isolated physics lab. They co-located it directly with RIKEN’s Fugaku supercomputer, which is consistently ranked among the most powerful classical computing systems on the planet.

This co-location was not symbolic. The two massive machines were physically and digitally networked to run a complex quantum chemistry problem in a closed loop. The Fugaku supercomputer handled the classical data processing, identified the specific computational bottlenecks, fed those specific sub-routines to the Quantum System Two, and received the quantum-processed data back in an unbroken, automated workflow.

This is the definition of quantum-centric supercomputing. It is not a demonstration of quantum physics; it is a demonstration of enterprise-grade computing architecture. It proved that quantum machines could be trusted as reliable nodes within a broader high-performance computing (HPC) environment.
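The shape of such a closed loop can be sketched with a toy variational routine. This is not the IBM and RIKEN workflow; it is a minimal illustration in which a classical optimizer repeatedly calls a quantum subroutine, here replaced by a simulated single-qubit measurement. The function `quantum_expectation` stands in for the quantum processor and is hypothetical, as are the step size and shot count.

```python
import math
import random

_rng = random.Random(7)  # fixed seed so the sketch is reproducible


def quantum_expectation(theta: float, shots: int = 4000) -> float:
    """Stand-in for the quantum processor: estimate <Z> for the state RY(theta)|0>."""
    p0 = math.cos(theta / 2) ** 2  # probability of measuring 0
    zeros = sum(_rng.random() < p0 for _ in range(shots))
    return (2 * zeros - shots) / shots  # (#0 - #1) / shots


def hybrid_minimize(theta: float = 0.3, lr: float = 0.4, steps: int = 60):
    """Classical side of the loop: gradient descent using the parameter-shift rule."""
    for _ in range(steps):
        # Two extra quantum calls per step give the gradient of the expectation value.
        plus = quantum_expectation(theta + math.pi / 2)
        minus = quantum_expectation(theta - math.pi / 2)
        theta -= lr * (plus - minus) / 2
    return theta, quantum_expectation(theta)


theta, energy = hybrid_minimize()
print(f"theta = {theta:.3f}, estimated <Z> = {energy:.3f}")
```

The classical loop never sees the quantum state itself, only noisy expectation values. That is why latency and reliability of the link between the two machines matter as much as the qubits.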

NVIDIA’s Classical Backbone

As hardware companies built the quantum chips, the king of classical AI computing stepped in to handle the traffic. NVIDIA, recognized globally for the GPUs that power the artificial intelligence revolution, identified a massive bottleneck in the quantum industry: quantum computers require immense classical computing power simply to function.

Quantum error correction, specifically the process of reading error syndromes and calculating the necessary corrections in real time before the qubits decohere, is an extremely demanding classical compute problem. If the classical decoder cannot keep pace, uncorrected errors pile up faster than they can be fixed and the computation fails.
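A toy version of this decoding step is sketched below, using the three-qubit bit-flip code and a lookup table. Production decoders for surface codes are far more involved; the timing figures in the backlog helper are illustrative assumptions rather than measured values, and all names are hypothetical.

```python
# Syndrome (parity of qubits 0,1 and parity of qubits 1,2) -> index of the qubit to flip.
SYNDROME_TO_FLIP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def measure_syndrome(bits):
    """Parity checks reveal where an error sits without reading the encoded value."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])


def decode(bits):
    """Apply the correction that the lookup table suggests for this syndrome."""
    corrected = list(bits)
    flip = SYNDROME_TO_FLIP[measure_syndrome(bits)]
    if flip is not None:
        corrected[flip] ^= 1
    return corrected


def decoder_backlog(rounds: int, cycle_us: float, decode_us: float) -> int:
    """Syndrome rounds still waiting for a decision when the circuit ends."""
    decoded = min(rounds, int(rounds * cycle_us / decode_us))
    return rounds - decoded


print(decode([1, 0, 0]))  # a single flip on qubit 0 is repaired
print(decode([1, 1, 0]))  # two flips fool the decoder into a logical error
print(decoder_backlog(rounds=1000, cycle_us=1.0, decode_us=2.0))
```

The backlog helper captures the timing constraint: when decoding a round takes longer than producing one, the queue of undecoded syndromes grows with every cycle.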

To solve this, NVIDIA introduced Ising, a family of open-source quantum AI models. These models span key quantum workloads, starting with the automation of calibration. Tuning a quantum processor used to take teams of post-docs weeks of manual adjustments; NVIDIA’s AI models can automate and optimize this tuning dynamically.

Furthermore, NVIDIA integrated these models into its CUDA-Q software platform and introduced the NVQLink hardware interconnect. This technology physically and logically connects delicate quantum processors directly to massive arrays of NVIDIA GPUs.

The premise is brilliant in its pragmatism: let the quantum chip handle the quantum states, and let AI-driven GPUs manage the devastatingly complex classical bottlenecks of error correction and calibration. NVIDIA’s entry into the space signaled that quantum computing had matured enough to require the world’s most advanced classical orchestrators.

What Broke the Framing: The Convergence of Disciplines

For three decades, the “decade away” framing held strong because progress was entirely legible to researchers but completely opaque to enterprise infrastructure managers.

Progress was measured in physical metrics:

T1 relaxation times

T2 dephasing times

Two-qubit gate fidelities

If you were a CIO planning a five-year infrastructure budget, these metrics were meaningless. You cannot build a business case around T2 dephasing times. You need delivery dates, rack-space requirements, cooling specifications, and API documentation.

What ultimately disrupted the perpetual decade was not a single, miraculous result. It was a rapid, aggressive convergence of hardware physics, software engineering, and data center architecture.

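Let us summarize the convergence of the mid-2020s:

Google Willow (December 2024): error correction demonstrated below the fault-tolerance threshold

Microsoft Majorana 1 (February 2025): a palm-sized chip designed for standard Azure data center racks

IBM and RIKEN: a Quantum System Two networked with the Fugaku supercomputer in a closed-loop workflow

NVIDIA: Ising models, CUDA-Q, and NVQLink handing calibration and error correction to GPUs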

None of these individual milestones from 2024 to 2026 represents a complete, fault-tolerant, million-qubit machine capable of breaking RSA encryption. However, they collectively represent the end of the physics experiment phase.

When Microsoft designs a processor specifically to fit into the standard physical footprint of an Azure server rack, when IBM co-locates and networks a quantum system with one of the world’s most powerful supercomputers, when NVIDIA trains AI models to babysit the fragile qubits, these are milestones in engineering, integration, and supply chain management.

The 2030 Horizon: An Engineering Schedule

In 2020, the global consulting firm McKinsey published an estimate projecting that up to 5,000 quantum computers would be operational worldwide by 2030. However, they cautioned that the sophisticated hardware and software required to tackle the world’s most complex, disruptive problems might not be fully available until 2035 or later.

To a casual observer, that timeline sounds suspiciously like the same old “decade away” trope wearing a new suit. But the qualitative nature of that wait has fundamentally changed.

The quantum computers showing up in data centers in 2026 are not delicate laboratory prototypes waiting on a miraculous, Nobel-prize-winning discovery in theoretical physics to function. They are robust processors. They are connected via fiber optics and specialized interconnects to the existing global computing infrastructure. They sit in the hum and roar of data centers, right next to the CPUs and GPUs that run the modern digital economy.

Building a fault-tolerant, million-qubit quantum computer remains one of the most complex challenges humanity has ever undertaken. It requires advancements in cryogenics, microwave cabling, material fabrication, and algorithmic efficiency. But the nature of the challenge has shifted from the realm of the unknown to the realm of the difficult.

We are no longer wondering if physics will allow us to build these machines. We are simply executing the engineering schedule required to assemble them.

The “decade away” framing may still be numerically accurate for achieving a fully fault-tolerant, cryptographically relevant quantum computer. But for the very first time since Peter Shor published his groundbreaking algorithm in 1994, that decade measures a concrete, step-by-step engineering roadmap, not a distant, perpetual hope.